- java.lang.Object

- io.reactivex.rxjava3.parallel.ParallelFlowable<T>

- Type Parameters:

T - the value type

public abstract class ParallelFlowable<T> extends Object

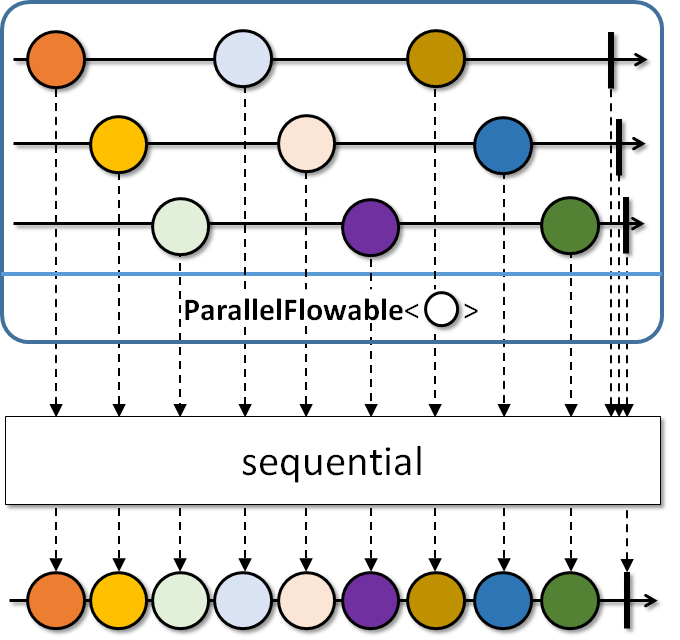

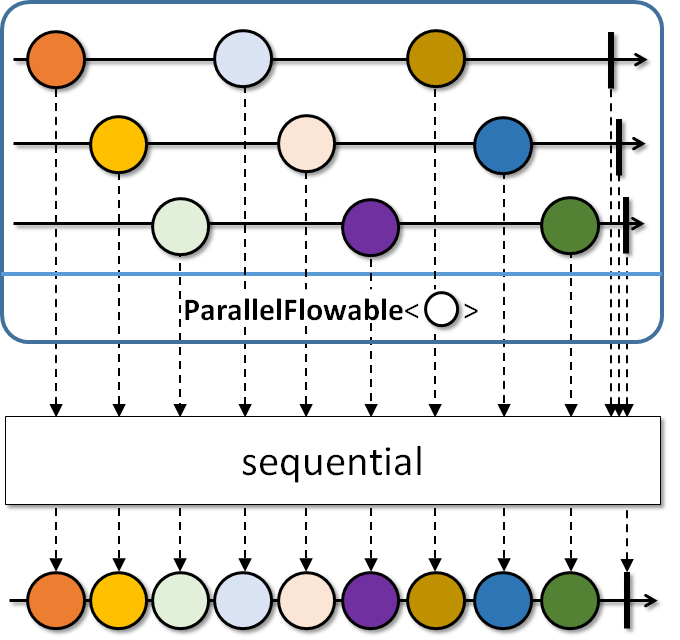

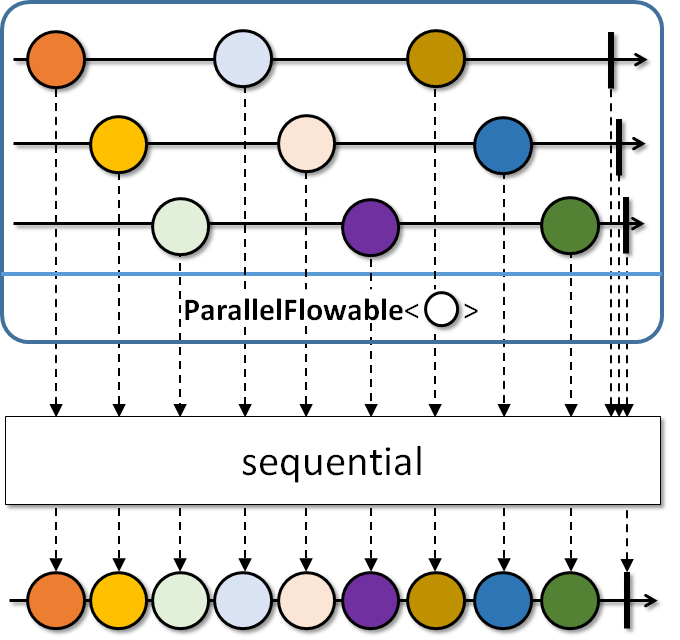

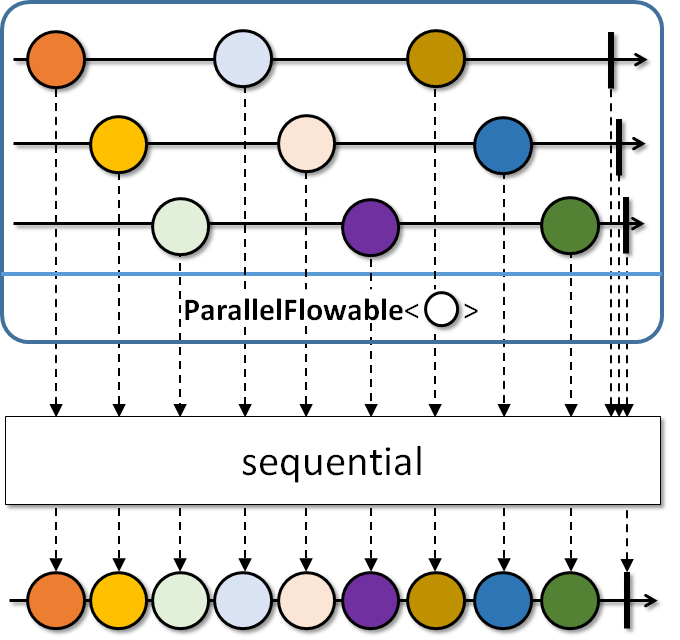

Abstract base class for parallel publishing of events signaled to an array of Subscribers.

Use from(Publisher) to start processing a regular Publisher in 'rails'. Use runOn(Scheduler) to specify where each 'rail' should run, thread-wise. Use sequential() to merge the sources back into a single Flowable.

History: 2.0.5 - experimental; 2.1 - beta

- Since:

- 2.2
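The three steps named in the class description (from, runOn, sequential) can be combined as in the following minimal sketch. The class name ParallelPipelineSketch and the method squares() are illustrative names only, and the final sorted() call is added here only because the arrival order after sequential() is not something the sketch relies on.

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFlowable;
import io.reactivex.rxjava3.schedulers.Schedulers;

import java.util.List;

public class ParallelPipelineSketch {

    // Squares 1..10 on parallel rails and collects the merged results.
    static List<Integer> squares() {
        return ParallelFlowable.from(Flowable.range(1, 10)) // split the source into rails
                .runOn(Schedulers.computation())             // run each rail on a Scheduler worker
                .map(v -> v * v)                             // mapper may be called concurrently
                .sequential()                                // merge the rails into one Flowable
                .sorted()                                    // do not depend on arrival order
                .toList()
                .blockingGet();
    }

    public static void main(String[] args) {
        System.out.println(squares());
    }
}
```

Without runOn, the rails are still separate but nothing in this chain moves them onto other threads.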


Constructor Summary

| Constructor | Description |
| --- | --- |
| ParallelFlowable() | |


Method Summary

| Modifier and Type | Method | Description |
| --- | --- | --- |
| <A,R> @NonNull Flowable<R> | collect(@NonNull Collector<T,A,R> collector) | |
| <C> @NonNull ParallelFlowable<C> | collect(@NonNull Supplier<? extends C> collectionSupplier, @NonNull BiConsumer<? super C,? super T> collector) | Collect the elements in each rail into a collection supplied via a collectionSupplier and collected into with a collector action, emitting the collection at the end. |
| <U> @NonNull ParallelFlowable<U> | compose(@NonNull ParallelTransformer<T,U> composer) | Allows composing operators, in assembly time, on top of this ParallelFlowable and returns another ParallelFlowable with composed features. |
| <R> @NonNull ParallelFlowable<R> | concatMap(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper) | Generates and concatenates Publishers on each 'rail', signalling errors immediately and generating 2 publishers upfront. |
| <R> @NonNull ParallelFlowable<R> | concatMap(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper, int prefetch) | Generates and concatenates Publishers on each 'rail', signalling errors immediately and using the given prefetch amount for generating Publishers upfront. |
| <R> @NonNull ParallelFlowable<R> | concatMapDelayError(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean tillTheEnd) | Generates and concatenates Publishers on each 'rail', optionally delaying errors and generating 2 publishers upfront. |
| <R> @NonNull ParallelFlowable<R> | concatMapDelayError(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper, int prefetch, boolean tillTheEnd) | Generates and concatenates Publishers on each 'rail', optionally delaying errors and using the given prefetch amount for generating Publishers upfront. |
| @NonNull ParallelFlowable<T> | doAfterNext(@NonNull Consumer<? super T> onAfterNext) | Call the specified consumer with the current element passing through any 'rail' after it has been delivered to downstream within the rail. |
| @NonNull ParallelFlowable<T> | doAfterTerminated(@NonNull Action onAfterTerminate) | Run the specified Action when a 'rail' completes or signals an error. |
| @NonNull ParallelFlowable<T> | doOnCancel(@NonNull Action onCancel) | Run the specified Action when a 'rail' receives a cancellation. |
| @NonNull ParallelFlowable<T> | doOnComplete(@NonNull Action onComplete) | Run the specified Action when a 'rail' completes. |
| @NonNull ParallelFlowable<T> | doOnError(@NonNull Consumer<? super Throwable> onError) | Call the specified consumer with the exception passing through any 'rail'. |
| @NonNull ParallelFlowable<T> | doOnNext(@NonNull Consumer<? super T> onNext) | Call the specified consumer with the current element passing through any 'rail'. |
| @NonNull ParallelFlowable<T> | doOnNext(@NonNull Consumer<? super T> onNext, @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler) | Call the specified consumer with the current element passing through any 'rail' and handles errors based on the returned value by the handler function. |
| @NonNull ParallelFlowable<T> | doOnNext(@NonNull Consumer<? super T> onNext, @NonNull ParallelFailureHandling errorHandler) | Call the specified consumer with the current element passing through any 'rail' and handles errors based on the given ParallelFailureHandling enumeration value. |
| @NonNull ParallelFlowable<T> | doOnRequest(@NonNull LongConsumer onRequest) | Call the specified consumer with the request amount if any rail receives a request. |
| @NonNull ParallelFlowable<T> | doOnSubscribe(@NonNull Consumer<? super Subscription> onSubscribe) | Call the specified callback when a 'rail' receives a Subscription from its upstream. |
| @NonNull ParallelFlowable<T> | filter(@NonNull Predicate<? super T> predicate) | Filters the source values on each 'rail'. |
| @NonNull ParallelFlowable<T> | filter(@NonNull Predicate<? super T> predicate, @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler) | Filters the source values on each 'rail' and handles errors based on the returned value by the handler function. |
| @NonNull ParallelFlowable<T> | filter(@NonNull Predicate<? super T> predicate, @NonNull ParallelFailureHandling errorHandler) | Filters the source values on each 'rail' and handles errors based on the given ParallelFailureHandling enumeration value. |
| <R> @NonNull ParallelFlowable<R> | flatMap(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper) | Generates and flattens Publishers on each 'rail'. |
| <R> @NonNull ParallelFlowable<R> | flatMap(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean delayError) | Generates and flattens Publishers on each 'rail', optionally delaying errors. |
| <R> @NonNull ParallelFlowable<R> | flatMap(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean delayError, int maxConcurrency) | Generates and flattens Publishers on each 'rail', optionally delaying errors and having a total number of simultaneous subscriptions to the inner Publishers. |
| <R> @NonNull ParallelFlowable<R> | flatMap(@NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean delayError, int maxConcurrency, int prefetch) | Generates and flattens Publishers on each 'rail', optionally delaying errors, having a total number of simultaneous subscriptions to the inner Publishers and using the given prefetch amount for the inner Publishers. |
| <U> @NonNull ParallelFlowable<U> | flatMapIterable(@NonNull Function<? super T,? extends Iterable<? extends U>> mapper) | Returns a ParallelFlowable that merges each item emitted by the source on each rail with the values in an Iterable corresponding to that item that is generated by a selector. |
| <U> @NonNull ParallelFlowable<U> | flatMapIterable(@NonNull Function<? super T,? extends Iterable<? extends U>> mapper, int bufferSize) | Returns a ParallelFlowable that merges each item emitted by the source ParallelFlowable with the values in an Iterable corresponding to that item that is generated by a selector. |
| <R> @NonNull ParallelFlowable<R> | flatMapStream(@NonNull Function<? super T,? extends Stream<? extends R>> mapper) | Maps each upstream item on each rail into a Stream and emits the Stream's items to the downstream in a sequential fashion. |
| <R> @NonNull ParallelFlowable<R> | flatMapStream(@NonNull Function<? super T,? extends Stream<? extends R>> mapper, int prefetch) | Maps each upstream item of each rail into a Stream and emits the Stream's items to the downstream in a sequential fashion. |
| static <T> @NonNull ParallelFlowable<T> | from(@NonNull Publisher<? extends T> source) | Take a Publisher and prepare to consume it on multiple 'rails' (number of CPUs) in a round-robin fashion. |
| static <T> @NonNull ParallelFlowable<T> | from(@NonNull Publisher<? extends T> source, int parallelism) | Take a Publisher and prepare to consume it on parallelism number of 'rails' in a round-robin fashion. |
| static <T> @NonNull ParallelFlowable<T> | from(@NonNull Publisher<? extends T> source, int parallelism, int prefetch) | Take a Publisher and prepare to consume it on parallelism number of 'rails', in a possibly ordered, round-robin fashion, using a custom prefetch amount and queue for dealing with the source Publisher's values. |
| static <T> @NonNull ParallelFlowable<T> | fromArray(Publisher<T>... publishers) | Wraps multiple Publishers into a ParallelFlowable which runs them in parallel and unordered. |
| <R> @NonNull ParallelFlowable<R> | map(@NonNull Function<? super T,? extends R> mapper) | Maps the source values on each 'rail' to another value. |
| <R> @NonNull ParallelFlowable<R> | map(@NonNull Function<? super T,? extends R> mapper, @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler) | Maps the source values on each 'rail' to another value and handles errors based on the returned value by the handler function. |
| <R> @NonNull ParallelFlowable<R> | map(@NonNull Function<? super T,? extends R> mapper, @NonNull ParallelFailureHandling errorHandler) | Maps the source values on each 'rail' to another value and handles errors based on the given ParallelFailureHandling enumeration value. |
| <R> @NonNull ParallelFlowable<R> | mapOptional(@NonNull Function<? super T,Optional<? extends R>> mapper) | Maps the source values on each 'rail' to an optional and emits its value if any. |
| <R> @NonNull ParallelFlowable<R> | mapOptional(@NonNull Function<? super T,Optional<? extends R>> mapper, @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler) | Maps the source values on each 'rail' to an optional and emits its value if any and handles errors based on the returned value by the handler function. |
| <R> @NonNull ParallelFlowable<R> | mapOptional(@NonNull Function<? super T,Optional<? extends R>> mapper, @NonNull ParallelFailureHandling errorHandler) | Maps the source values on each 'rail' to an optional and emits its value if any and handles errors based on the given ParallelFailureHandling enumeration value. |
| abstract int | parallelism() | Returns the number of expected parallel Subscribers. |
| @NonNull Flowable<T> | reduce(@NonNull BiFunction<T,T,T> reducer) | Reduces all values within a 'rail' and across 'rails' with a reducer function into one Flowable sequence. |
| <R> @NonNull ParallelFlowable<R> | reduce(@NonNull Supplier<R> initialSupplier, @NonNull BiFunction<R,? super T,R> reducer) | Reduces all values within a 'rail' to a single value (with a possibly different type) via a reducer function that is initialized on each rail from an initialSupplier value. |
| @NonNull ParallelFlowable<T> | runOn(@NonNull Scheduler scheduler) | Specifies where each 'rail' will observe its incoming values, specified via a Scheduler, with no work-stealing and default prefetch amount. |
| @NonNull ParallelFlowable<T> | runOn(@NonNull Scheduler scheduler, int prefetch) | Specifies where each 'rail' will observe its incoming values, specified via a Scheduler, with possibly work-stealing and a given prefetch amount. |
| @NonNull Flowable<T> | sequential() | Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a default prefetch value for the rails. |
| @NonNull Flowable<T> | sequential(int prefetch) | Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a given prefetch value for the rails. |
| @NonNull Flowable<T> | sequentialDelayError() | Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a default prefetch value for the rails and delaying errors from all rails till all terminate. |
| @NonNull Flowable<T> | sequentialDelayError(int prefetch) | Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a given prefetch value for the rails and delaying errors from all rails till all terminate. |
| @NonNull Flowable<T> | sorted(@NonNull Comparator<? super T> comparator) | Sorts the 'rails' of this ParallelFlowable and returns a Flowable that sequentially picks the smallest next value from the rails. |
| @NonNull Flowable<T> | sorted(@NonNull Comparator<? super T> comparator, int capacityHint) | Sorts the 'rails' of this ParallelFlowable and returns a Flowable that sequentially picks the smallest next value from the rails. |
| abstract void | subscribe(@NonNull Subscriber<? super T>[] subscribers) | Subscribes an array of Subscribers to this ParallelFlowable and triggers the execution chain for all 'rails'. |
| <R> R | to(@NonNull ParallelFlowableConverter<T,R> converter) | Calls the specified converter function during assembly time and returns its resulting value. |
| @NonNull Flowable<List<T>> | toSortedList(@NonNull Comparator<? super T> comparator) | Sorts the 'rails' according to the comparator and returns a full sorted List as a Flowable. |
| @NonNull Flowable<List<T>> | toSortedList(@NonNull Comparator<? super T> comparator, int capacityHint) | Sorts the 'rails' according to the comparator and returns a full sorted List as a Flowable. |
| protected boolean | validate(@NonNull Subscriber<?>[] subscribers) | Validates the number of subscribers and returns true if their number matches the parallelism level of this ParallelFlowable. |


Method Detail

-

subscribe

@BackpressureSupport(value=SPECIAL) @SchedulerSupport(value="none") public abstract void subscribe(@NonNull @NonNull Subscriber<? super T>[] subscribers)

Subscribes an array of Subscribers to this ParallelFlowable and triggers the execution chain for all 'rails'.

- Backpressure:

- The backpressure behavior/expectation is determined by the supplied Subscriber.

- Scheduler:

- subscribe does not operate by default on a particular Scheduler.

- Parameters:

subscribers - the subscribers array to run in parallel, the number of items must be equal to the parallelism level of this ParallelFlowable

- Throws:

NullPointerException - if subscribers is null

- See Also:

parallelism()

-

parallelism

@CheckReturnValue public abstract int parallelism()

Returns the number of expected parallel Subscribers.

- Returns:

- the number of expected parallel Subscribers

-

validate

protected final boolean validate(@NonNull @NonNull Subscriber<?>[] subscribers)

Validates the number of subscribers and returns true if their number matches the parallelism level of this ParallelFlowable.

- Parameters:

subscribers - the array of Subscribers

- Returns:

- true if the number of subscribers equals the parallelism level

- Throws:

NullPointerException - if subscribers is null

IllegalArgumentException - if subscribers.length is different from parallelism()
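The three members above (subscribe, parallelism, validate) are the extension points of this class. A minimal sketch of how a subclass might use them together follows; PassThrough and ValidatingParallelSketch are hypothetical names, not part of RxJava, and the wrapper does nothing beyond delegating to another ParallelFlowable.

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFlowable;
import org.reactivestreams.Subscriber;

import java.util.List;

public class ValidatingParallelSketch {

    // Hypothetical pass-through wrapper, shown only to illustrate the abstract methods.
    static final class PassThrough<T> extends ParallelFlowable<T> {
        private final ParallelFlowable<T> source;

        PassThrough(ParallelFlowable<T> source) {
            this.source = source;
        }

        @Override
        public int parallelism() {
            // Report the same number of rails as the wrapped source.
            return source.parallelism();
        }

        @Override
        public void subscribe(Subscriber<? super T>[] subscribers) {
            // Proceed only if the array length matches parallelism().
            if (!validate(subscribers)) {
                return;
            }
            source.subscribe(subscribers);
        }
    }

    static List<Integer> run() {
        ParallelFlowable<Integer> rails = ParallelFlowable.from(Flowable.range(1, 4), 2);
        // sequential() supplies one Subscriber per rail to subscribe(...).
        return new PassThrough<>(rails).sequential().toList().blockingGet();
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```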

-

from

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=FULL) public static <T> @NonNull ParallelFlowable<T> from(@NonNull @NonNull Publisher<? extends T> source)

Take a Publisher and prepare to consume it on multiple 'rails' (number of CPUs) in a round-robin fashion.

- Backpressure:

- The operator honors the backpressure of the parallel rails and requests Flowable.bufferSize() amount from the upstream, followed by 75% of that amount requested after every 75% received.

- Scheduler:

- from does not operate by default on a particular Scheduler.

- Type Parameters:

T - the value type

- Parameters:

source - the source Publisher

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if source is null

-

from

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=FULL) public static <T> @NonNull ParallelFlowable<T> from(@NonNull @NonNull Publisher<? extends T> source, int parallelism)

Take a Publisher and prepare to consume it on parallelism number of 'rails' in a round-robin fashion.

- Backpressure:

- The operator honors the backpressure of the parallel rails and requests Flowable.bufferSize() amount from the upstream, followed by 75% of that amount requested after every 75% received.

- Scheduler:

- from does not operate by default on a particular Scheduler.

- Type Parameters:

T - the value type

- Parameters:

source - the source Publisher

parallelism - the number of parallel rails

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if source is null

IllegalArgumentException - if parallelism is non-positive

-

from

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=FULL) public static <T> @NonNull ParallelFlowable<T> from(@NonNull @NonNull Publisher<? extends T> source, int parallelism, int prefetch)

Take a Publisher and prepare to consume it on parallelism number of 'rails', in a possibly ordered, round-robin fashion, using a custom prefetch amount and queue for dealing with the source Publisher's values.

- Backpressure:

- The operator honors the backpressure of the parallel rails and requests the prefetch amount from the upstream, followed by 75% of that amount requested after every 75% received.

- Scheduler:

- from does not operate by default on a particular Scheduler.

- Type Parameters:

T - the value type

- Parameters:

source - the source Publisher

parallelism - the number of parallel rails

prefetch - the number of values to prefetch from the source until there is a rail ready to process them

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if source is null

IllegalArgumentException - if parallelism or prefetch is non-positive
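The three from overloads differ only in how the rail count and prefetch amount are chosen. The sketch below, with the illustrative class name FromOverloadsSketch, reads back the resulting parallelism level and checks the documented IllegalArgumentException; the concrete numbers (100 items, 3 rails, prefetch 16) are arbitrary.

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFlowable;

public class FromOverloadsSketch {

    // Rail count when only the source is given (documented as the number of CPUs).
    static int defaultRails() {
        return ParallelFlowable.from(Flowable.range(1, 100)).parallelism();
    }

    // Rail count when the parallelism is given explicitly.
    static int explicitRails() {
        return ParallelFlowable.from(Flowable.range(1, 100), 3).parallelism();
    }

    // Explicit parallelism plus a custom prefetch amount.
    static int explicitRailsAndPrefetch() {
        return ParallelFlowable.from(Flowable.range(1, 100), 3, 16).parallelism();
    }

    // A non-positive parallelism is rejected at assembly time.
    static boolean rejectsNonPositiveParallelism() {
        try {
            ParallelFlowable.from(Flowable.range(1, 100), 0);
            return false;
        } catch (IllegalArgumentException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(defaultRails() + " " + explicitRails() + " " + explicitRailsAndPrefetch());
    }
}
```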

-

map

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final <R> @NonNull ParallelFlowable<R> map(@NonNull @NonNull Function<? super T,? extends R> mapper)

Maps the source values on each 'rail' to another value.

Note that the same mapper function may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- map does not operate by default on a particular Scheduler.

- Type Parameters:

R - the output value type

- Parameters:

mapper - the mapper function turning Ts into Rs.

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if mapper is null

-

map

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final <R> @NonNull ParallelFlowable<R> map(@NonNull @NonNull Function<? super T,? extends R> mapper, @NonNull @NonNull ParallelFailureHandling errorHandler)

Maps the source values on each 'rail' to another value and handles errors based on the given ParallelFailureHandling enumeration value.

Note that the same mapper function may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- map does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Type Parameters:

R - the output value type

- Parameters:

mapper - the mapper function turning Ts into Rs.

errorHandler - the enumeration that defines how to handle errors thrown from the mapper function

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if mapper or errorHandler is null

- Since:

- 2.2

-

map

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final <R> @NonNull ParallelFlowable<R> map(@NonNull @NonNull Function<? super T,? extends R> mapper, @NonNull @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler)

Maps the source values on each 'rail' to another value and handles errors based on the returned value by the handler function.

Note that the same mapper function may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- map does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Type Parameters:

R - the output value type

- Parameters:

mapper - the mapper function turning Ts into Rs.

errorHandler - the function called with the current repeat count and failure Throwable and should return one of the ParallelFailureHandling enumeration values to indicate how to proceed.

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if mapper or errorHandler is null

- Since:

- 2.2
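The two error-handling overloads of map can be contrasted as in the following sketch. The class name MapFailureHandlingSketch and the division example are illustrative assumptions; the value 0 makes the mapper throw an ArithmeticException, which the handlers choose to skip. The results are sorted afterwards so the sketch does not depend on how the rails interleave.

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFailureHandling;
import io.reactivex.rxjava3.parallel.ParallelFlowable;

import java.util.List;

public class MapFailureHandlingSketch {

    // Enumeration variant: every mapper failure is skipped.
    static List<Integer> skipWithEnum() {
        return ParallelFlowable.from(Flowable.range(0, 5), 2)
                .map(v -> 60 / v, ParallelFailureHandling.SKIP) // 60 / 0 throws and is skipped
                .sequential()
                .sorted()
                .toList()
                .blockingGet();
    }

    // Function variant: the handler inspects the failure and decides per error.
    static List<Integer> skipWithFunction() {
        return ParallelFlowable.from(Flowable.range(0, 5), 2)
                .map(v -> 60 / v,
                        (count, error) -> error instanceof ArithmeticException
                                ? ParallelFailureHandling.SKIP
                                : ParallelFailureHandling.ERROR)
                .sequential()
                .sorted()
                .toList()
                .blockingGet();
    }

    public static void main(String[] args) {
        System.out.println(skipWithEnum());
        System.out.println(skipWithFunction());
    }
}
```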

-

filter

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final @NonNull ParallelFlowable<T> filter(@NonNull @NonNull Predicate<? super T> predicate)

Filters the source values on each 'rail'.

Note that the same predicate may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- filter does not operate by default on a particular Scheduler.

- Parameters:

predicate - the function returning true to keep a value or false to drop a value

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if predicate is null

-

filter

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final @NonNull ParallelFlowable<T> filter(@NonNull @NonNull Predicate<? super T> predicate, @NonNull @NonNull ParallelFailureHandling errorHandler)

Filters the source values on each 'rail' and handles errors based on the given ParallelFailureHandling enumeration value.

Note that the same predicate may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- filter does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Parameters:

predicate - the function returning true to keep a value or false to drop a value

errorHandler - the enumeration that defines how to handle errors thrown from the predicate

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if predicate or errorHandler is null

- Since:

- 2.2

-

filter

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final @NonNull ParallelFlowable<T> filter(@NonNull @NonNull Predicate<? super T> predicate, @NonNull @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler)

Filters the source values on each 'rail' and handles errors based on the returned value by the handler function.

Note that the same predicate may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- filter does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Parameters:

predicate - the function returning true to keep a value or false to drop a value

errorHandler - the function called with the current repeat count and failure Throwable and should return one of the ParallelFailureHandling enumeration values to indicate how to proceed.

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if predicate or errorHandler is null

- Since:

- 2.2
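The handler-function overload of filter receives a repeat count alongside the failure, which allows a bounded retry before giving up on an item. The following is a sketch under assumed names (FilterRetrySketch, keepOdds) with an artificial predicate that always fails for the value 3; the retry limit of 3 is arbitrary.

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFailureHandling;
import io.reactivex.rxjava3.parallel.ParallelFlowable;

import java.util.List;

public class FilterRetrySketch {

    // Keeps odd values; the predicate fails for 3, which is retried and then skipped.
    static List<Integer> keepOdds() {
        return ParallelFlowable.from(Flowable.range(1, 6), 2)
                .filter(v -> {
                    if (v == 3) {
                        throw new IllegalStateException("cannot test 3");
                    }
                    return v % 2 == 1;
                }, (count, error) -> count < 3
                        ? ParallelFailureHandling.RETRY   // try the same item again
                        : ParallelFailureHandling.SKIP)   // give up and drop the item
                .sequential()
                .sorted()
                .toList()
                .blockingGet();
    }

    public static void main(String[] args) {
        System.out.println(keepOdds());
    }
}
```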

-

runOn

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="custom") public final @NonNull ParallelFlowable<T> runOn(@NonNull @NonNull Scheduler scheduler)

Specifies where each 'rail' will observe its incoming values, specified via a Scheduler, with no work-stealing and default prefetch amount.

This operator uses the default prefetch size returned by Flowable.bufferSize().

The operator will call Scheduler.createWorker() as many times as this ParallelFlowable's parallelism level is.

No assumptions are made about the Scheduler's parallelism level; if the Scheduler's parallelism level is lower than the ParallelFlowable's, some rails may end up on the same thread/worker.

This operator doesn't require the Scheduler to be trampolining as it does its own built-in trampolining logic.

- Backpressure:

- The operator honors the backpressure of the parallel rails and requests Flowable.bufferSize() amount from the upstream, followed by 75% of that amount requested after every 75% received.

- Scheduler:

- runOn drains the upstream rails on the specified Scheduler's Workers.

- Parameters:

scheduler - the scheduler to use

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if scheduler is null

-

runOn

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="custom") public final @NonNull ParallelFlowable<T> runOn(@NonNull @NonNull Scheduler scheduler, int prefetch)

Specifies where each 'rail' will observe its incoming values, specified via a Scheduler, with possibly work-stealing and a given prefetch amount.

This operator uses the given prefetch amount rather than the default size returned by Flowable.bufferSize().

The operator will call Scheduler.createWorker() as many times as this ParallelFlowable's parallelism level is.

No assumptions are made about the Scheduler's parallelism level; if the Scheduler's parallelism level is lower than the ParallelFlowable's, some rails may end up on the same thread/worker.

This operator doesn't require the Scheduler to be trampolining as it does its own built-in trampolining logic.

- Backpressure:

- The operator honors the backpressure of the parallel rails and requests the prefetch amount from the upstream, followed by 75% of that amount requested after every 75% received.

- Scheduler:

- runOn drains the upstream rails on the specified Scheduler's Workers.

- Parameters:

scheduler - the scheduler to use

prefetch - the number of values to request on each 'rail' from the source

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if scheduler is null

IllegalArgumentException - if prefetch is non-positive
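To see where the rails run after runOn, the following sketch records the thread names observed by a doOnNext placed directly after it. RunOnSketch and railThreads are illustrative names; the rail count of 4 and prefetch of 16 are arbitrary. How many distinct threads show up depends on the machine and the Scheduler, so the sketch makes no claim about that number.

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFlowable;
import io.reactivex.rxjava3.schedulers.Schedulers;

import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;

public class RunOnSketch {

    // Collects the names of the threads on which rail items are observed.
    static Set<String> railThreads() {
        Set<String> names = ConcurrentHashMap.newKeySet(); // thread-safe: rails run concurrently
        ParallelFlowable.from(Flowable.range(1, 1000), 4)
                .runOn(Schedulers.computation(), 16)       // one worker per rail, prefetch 16
                .doOnNext(v -> names.add(Thread.currentThread().getName()))
                .sequential()
                .blockingSubscribe();                      // wait until all rails finish
        return names;
    }

    public static void main(String[] args) {
        System.out.println(railThreads());
    }
}
```

The set is a concurrent one because, as noted for map and filter, callbacks on different rails may be invoked from multiple threads at the same time.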

-

reduce

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final @NonNull Flowable<T> reduce(@NonNull @NonNull BiFunction<T,T,T> reducer)

Reduces all values within a 'rail' and across 'rails' with a reducer function into one Flowable sequence.

Note that the same reducer function may be called from multiple threads concurrently.

- Backpressure:

- The operator honors backpressure from the downstream and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- reduce does not operate by default on a particular Scheduler.

- Parameters:

reducer - the function to reduce two values into one.

- Returns:

- the new Flowable instance emitting the reduced value or empty if the current ParallelFlowable is empty

- Throws:

NullPointerException - if reducer is null

-

reduce

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> reduce(@NonNull @NonNull Supplier<R> initialSupplier, @NonNull @NonNull BiFunction<R,? super T,R> reducer)

Reduces all values within a 'rail' to a single value (with a possibly different type) via a reducer function that is initialized on each rail from an initialSupplier value.

Note that the same reducer function may be called from multiple threads concurrently.

- Backpressure:

- The operator honors backpressure from the downstream rails and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- reduce does not operate by default on a particular Scheduler.

- Type Parameters:

R - the reduced output type

- Parameters:

initialSupplier - the supplier for the initial value

reducer - the function to reduce a previous output of reduce (or the initial value supplied) with a current source value.

- Returns:

- the new ParallelFlowable instance

- Throws:

NullPointerException - if initialSupplier or reducer is null
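The two reduce overloads return different types: the first collapses everything into a single Flowable value, while the second keeps one reduced value per rail. A minimal sketch with the illustrative class name ReduceSketch, summing 1..10 over two rails:

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFlowable;

import java.util.List;

public class ReduceSketch {

    // Reduces within each rail and then across rails into one value.
    static int total() {
        return ParallelFlowable.from(Flowable.range(1, 10), 2)
                .reduce(Integer::sum)
                .blockingSingle();
    }

    // Reduces within each rail only; the result still has one value per rail.
    static List<Integer> perRailSums() {
        return ParallelFlowable.from(Flowable.range(1, 10), 2)
                .reduce(() -> 0, (sum, v) -> sum + v) // each rail starts from its own 0
                .sequential()
                .toList()
                .blockingGet();
    }

    public static void main(String[] args) {
        System.out.println(total());
        System.out.println(perRailSums());
    }
}
```

Because the supplier is invoked per rail, a mutable initial value (for example a list) is not shared between rails.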

-

sequential

@BackpressureSupport(value=FULL) @SchedulerSupport(value="none") @CheckReturnValue @NonNull public final @NonNull Flowable<T> sequential()

Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a default prefetch value for the rails.

This operator uses the default prefetch size returned by Flowable.bufferSize().

- Backpressure:

- The operator honors backpressure from the downstream and requests Flowable.bufferSize() amount from each rail, then requests from each rail 75% of this amount after 75% received.

- Scheduler:

- sequential does not operate by default on a particular Scheduler.

- Returns:

- the new Flowable instance

- See Also:

sequential(int), sequentialDelayError()

-

sequential

@BackpressureSupport(value=FULL) @SchedulerSupport(value="none") @CheckReturnValue @NonNull public final @NonNull Flowable<T> sequential(int prefetch)

Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a given prefetch value for the rails.

- Backpressure:

- The operator honors backpressure from the downstream and requests the prefetch amount from each rail, then requests from each rail 75% of this amount after 75% received.

- Scheduler:

- sequential does not operate by default on a particular Scheduler.

- Parameters:

prefetch - the prefetch amount to use for each rail

- Returns:

- the new Flowable instance

- Throws:

IllegalArgumentException - if prefetch is non-positive

- See Also:

sequential(), sequentialDelayError(int)

-

sequentialDelayError

@BackpressureSupport(value=FULL) @SchedulerSupport(value="none") @CheckReturnValue @NonNull public final @NonNull Flowable<T> sequentialDelayError()

Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a default prefetch value for the rails and delaying errors from all rails till all terminate.

This operator uses the default prefetch size returned by Flowable.bufferSize().

- Backpressure:

- The operator honors backpressure from the downstream and requests Flowable.bufferSize() amount from each rail, then requests from each rail 75% of this amount after 75% received.

- Scheduler:

- sequentialDelayError does not operate by default on a particular Scheduler.

History: 2.0.7 - experimental

- Returns:

- the new Flowable instance

- Since:

- 2.2

- See Also:

sequentialDelayError(int), sequential()

-

sequentialDelayError

@BackpressureSupport(value=FULL) @SchedulerSupport(value="none") @CheckReturnValue @NonNull public final @NonNull Flowable<T> sequentialDelayError(int prefetch)

Merges the values from each 'rail' in a round-robin or same-order fashion and exposes it as a regular Flowable sequence, running with a given prefetch value for the rails and delaying errors from all rails till all terminate.

- Backpressure:

- The operator honors backpressure from the downstream and requests the prefetch amount from each rail, then requests from each rail 75% of this amount after 75% received.

- Scheduler:

- sequentialDelayError does not operate by default on a particular Scheduler.

History: 2.0.7 - experimental

- Parameters:

prefetch - the prefetch amount to use for each rail

- Returns:

- the new Flowable instance

- Throws:

IllegalArgumentException - if prefetch is non-positive

- Since:

- 2.2

- See Also:

sequential(), sequentialDelayError()
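A sketch of sequentialDelayError in use follows; SequentialDelayErrorSketch is an illustrative name and the failing mapper is artificial. Per the description above, the error from the failing rail is held back until all rails have terminated. Which values reach the subscriber before the error depends on how items were distributed across the rails, so the sketch only inspects the terminal signal.

```java
import io.reactivex.rxjava3.core.Flowable;
import io.reactivex.rxjava3.parallel.ParallelFlowable;
import io.reactivex.rxjava3.subscribers.TestSubscriber;

public class SequentialDelayErrorSketch {

    // One rail fails on the value 1; the merged sequence ends with that error.
    static TestSubscriber<Integer> run() {
        return ParallelFlowable.from(Flowable.range(1, 4), 2)
                .map(v -> {
                    if (v == 1) {
                        throw new IllegalStateException("rail failure");
                    }
                    return v;
                })
                .sequentialDelayError() // report the error only after every rail terminated
                .test();
    }

    public static void main(String[] args) {
        TestSubscriber<Integer> ts = run();
        ts.assertError(IllegalStateException.class);
        System.out.println(ts.values());
    }
}
```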

-

sorted

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final @NonNull Flowable<T> sorted(@NonNull @NonNull Comparator<? super T> comparator)

Sorts the 'rails' of this ParallelFlowable and returns a Flowable that sequentially picks the smallest next value from the rails.

This operator requires a finite source ParallelFlowable.

- Backpressure:

- The operator honors backpressure from the downstream and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- sorted does not operate by default on a particular Scheduler.

- Parameters:

comparator - the comparator to use

- Returns:

- the new Flowable instance

- Throws:

NullPointerException - if comparator is null

-

sorted

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final @NonNull Flowable<T> sorted(@NonNull @NonNull Comparator<? super T> comparator, int capacityHint)

Sorts the 'rails' of this ParallelFlowable and returns a Flowable that sequentially picks the smallest next value from the rails.

This operator requires a finite source ParallelFlowable.

- Backpressure:

- The operator honors backpressure from the downstream and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- sorted does not operate by default on a particular Scheduler.

- Parameters:

comparator - the comparator to use

capacityHint - the expected number of total elements

- Returns:

- the new Flowable instance

- Throws:

NullPointerException - if comparator is null

IllegalArgumentException - if capacityHint is non-positive

-

toSortedList

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final @NonNull Flowable<List<T>> toSortedList(@NonNull @NonNull Comparator<? super T> comparator)

Sorts the 'rails' according to the comparator and returns a full sorted List as a Flowable.

This operator requires a finite source ParallelFlowable.

- Backpressure:

- The operator honors backpressure from the downstream and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- toSortedList does not operate by default on a particular Scheduler.

- Parameters:

- comparator - the comparator to compare elements

- Returns:

- the new Flowable instance

- Throws:

- NullPointerException - if comparator is null

-

toSortedList

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final @NonNull Flowable<List<T>> toSortedList(@NonNull @NonNull Comparator<? super T> comparator, int capacityHint)

Sorts the 'rails' according to the comparator and returns a full sorted List as a Flowable.

This operator requires a finite source ParallelFlowable.

- Backpressure:

- The operator honors backpressure from the downstream and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- toSortedList does not operate by default on a particular Scheduler.

- Parameters:

- comparator - the comparator to compare elements

- capacityHint - the expected number of total elements

- Returns:

- the new Flowable instance

- Throws:

- NullPointerException - if comparator is null

- IllegalArgumentException - if capacityHint is non-positive

-

doOnNext

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doOnNext(@NonNull @NonNull Consumer<? super T> onNext)

Call the specified consumer with the current element passing through any 'rail'.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnNext does not operate by default on a particular Scheduler.

- Parameters:

- onNext - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onNext is null

-

doOnNext

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doOnNext(@NonNull @NonNull Consumer<? super T> onNext, @NonNull @NonNull ParallelFailureHandling errorHandler)

Calls the specified consumer with the current element passing through any 'rail' and handles errors based on the given ParallelFailureHandling enumeration value.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnNext does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Parameters:

- onNext - the callback

- errorHandler - the enumeration that defines how to handle errors thrown from the onNext consumer

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onNext or errorHandler is null

- Since:

- 2.2

-

doOnNext

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doOnNext(@NonNull @NonNull Consumer<? super T> onNext, @NonNull @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler)

Calls the specified consumer with the current element passing through any 'rail' and handles errors based on the value returned by the handler function.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnNext does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Parameters:

- onNext - the callback

- errorHandler - the function called with the current repeat count and failure Throwable; it should return one of the ParallelFailureHandling enumeration values to indicate how to proceed

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onNext or errorHandler is null

- Since:

- 2.2

-

doAfterNext

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doAfterNext(@NonNull @NonNull Consumer<? super T> onAfterNext)

Call the specified consumer with the current element passing through any 'rail' after it has been delivered to downstream within the rail.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doAfterNext does not operate by default on a particular Scheduler.

- Parameters:

- onAfterNext - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onAfterNext is null

-

doOnError

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doOnError(@NonNull @NonNull Consumer<? super Throwable> onError)

Call the specified consumer with the exception passing through any 'rail'.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnError does not operate by default on a particular Scheduler.

- Parameters:

- onError - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onError is null

-

doOnComplete

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doOnComplete(@NonNull @NonNull Action onComplete)

Run the specified Action when a 'rail' completes.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnComplete does not operate by default on a particular Scheduler.

- Parameters:

- onComplete - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onComplete is null

-

doAfterTerminated

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doAfterTerminated(@NonNull @NonNull Action onAfterTerminate)

Run the specified Action when a 'rail' completes or signals an error.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doAfterTerminated does not operate by default on a particular Scheduler.

- Parameters:

- onAfterTerminate - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onAfterTerminate is null

-

doOnSubscribe

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doOnSubscribe(@NonNull @NonNull Consumer<? super Subscription> onSubscribe)

Call the specified callback when a 'rail' receives a Subscription from its upstream.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnSubscribe does not operate by default on a particular Scheduler.

- Parameters:

- onSubscribe - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onSubscribe is null

-

doOnRequest

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final @NonNull ParallelFlowable<T> doOnRequest(@NonNull @NonNull LongConsumer onRequest)

Call the specified consumer with the request amount if any rail receives a request.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnRequest does not operate by default on a particular Scheduler.

- Parameters:

- onRequest - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onRequest is null

-

doOnCancel

@BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") @CheckReturnValue @NonNull public final @NonNull ParallelFlowable<T> doOnCancel(@NonNull @NonNull Action onCancel)

Run the specified Action when a 'rail' receives a cancellation.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- doOnCancel does not operate by default on a particular Scheduler.

- Parameters:

- onCancel - the callback

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if onCancel is null

-

collect

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final <C> @NonNull ParallelFlowable<C> collect(@NonNull @NonNull Supplier<? extends C> collectionSupplier, @NonNull @NonNull BiConsumer<? super C,? super T> collector)

Collect the elements in each rail into a collection supplied via a collectionSupplier and collected into with a collector action, emitting the collection at the end.

- Backpressure:

- The operator honors backpressure from the downstream rails and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- collect does not operate by default on a particular Scheduler.

- Type Parameters:

- C - the collection type

- Parameters:

- collectionSupplier - the supplier of the collection in each rail

- collector - the collector, taking the per-rail collection and the current item

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if collectionSupplier or collector is null

-

fromArray

@CheckReturnValue @NonNull @SafeVarargs @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public static <T> @NonNull ParallelFlowable<T> fromArray(@NonNull Publisher<T>... publishers)

Wraps multiple Publishers into a ParallelFlowable which runs them in parallel and unordered.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- fromArray does not operate by default on a particular Scheduler.

- Type Parameters:

- T - the value type

- Parameters:

- publishers - the array of publishers

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if publishers is null

- IllegalArgumentException - if publishers is an empty array

-

to

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final <R> R to(@NonNull @NonNull ParallelFlowableConverter<T,R> converter)

Calls the specified converter function during assembly time and returns its resulting value.

This allows fluent conversion to any other type.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by how the converter function composes over the upstream source.

- Scheduler:

- to does not operate by default on a particular Scheduler.

History: 2.1.7 - experimental

- Type Parameters:

- R - the resulting object type

- Parameters:

- converter - the function that receives the current ParallelFlowable instance and returns a value

- Returns:

- the converted value

- Throws:

- NullPointerException - if converter is null

- Since:

- 2.2

-

compose

@CheckReturnValue @NonNull @BackpressureSupport(value=PASS_THROUGH) @SchedulerSupport(value="none") public final <U> @NonNull ParallelFlowable<U> compose(@NonNull @NonNull ParallelTransformer<T,U> composer)

Allows composing operators, in assembly time, on top of this ParallelFlowable and returns another ParallelFlowable with composed features.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by how the converter function composes over the upstream source.

- Scheduler:

- compose does not operate by default on a particular Scheduler.

- Type Parameters:

- U - the output value type

- Parameters:

- composer - the composer function from ParallelFlowable (this) to another ParallelFlowable

- Returns:

- the ParallelFlowable returned by the function

- Throws:

- NullPointerException - if composer is null

-

flatMap

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> flatMap(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper)

Generates and flattens Publishers on each 'rail'.

Errors are not delayed, and the operator uses unbounded concurrency along with the default inner prefetch.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests Flowable.bufferSize() amount from each rail upfront and keeps requesting as many items per rail as inner sources on that rail have completed. The inner sources are requested Flowable.bufferSize() amount upfront, then 75% of this amount is requested after 75% received.

- Scheduler:

- flatMap does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

-

flatMap

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> flatMap(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean delayError)

Generates and flattens Publishers on each 'rail', optionally delaying errors.

It uses unbounded concurrency along with the default inner prefetch.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests Flowable.bufferSize() amount from each rail upfront and keeps requesting as many items per rail as inner sources on that rail have completed. The inner sources are requested Flowable.bufferSize() amount upfront, then 75% of this amount is requested after 75% received.

- Scheduler:

- flatMap does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- delayError - whether errors from the main and the inner sources should be delayed until all of them terminate

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

-

flatMap

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> flatMap(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean delayError, int maxConcurrency)

Generates and flattens Publishers on each 'rail', optionally delaying errors and limiting the number of simultaneous subscriptions to the inner Publishers.

It uses the default inner prefetch.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests maxConcurrency amount from each rail upfront and keeps requesting as many items per rail as inner sources on that rail have completed. The inner sources are requested Flowable.bufferSize() amount upfront, then 75% of this amount is requested after 75% received.

- Scheduler:

- flatMap does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- delayError - whether errors from the main and the inner sources should be delayed until all of them terminate

- maxConcurrency - the maximum number of simultaneous subscriptions to the generated inner Publishers

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- IllegalArgumentException - if maxConcurrency is non-positive

-

flatMap

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> flatMap(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean delayError, int maxConcurrency, int prefetch)

Generates and flattens Publishers on each 'rail', optionally delaying errors, limiting the number of simultaneous subscriptions to the inner Publishers and using the given prefetch amount for the inner Publishers.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests maxConcurrency amount from each rail upfront and keeps requesting as many items per rail as inner sources on that rail have completed. The inner sources are requested the prefetch amount upfront, then 75% of this amount is requested after 75% received.

- Scheduler:

- flatMap does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- delayError - whether errors from the main and the inner sources should be delayed until all of them terminate

- maxConcurrency - the maximum number of simultaneous subscriptions to the generated inner Publishers

- prefetch - the number of items to prefetch from each inner Publisher

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- IllegalArgumentException - if maxConcurrency or prefetch is non-positive

-

concatMap

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> concatMap(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper)

Generates and concatenates Publishers on each 'rail', signalling errors immediately and generating 2 publishers upfront.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests 2 from each rail upfront and keeps requesting 1 when the inner source completes. Requests for the inner sources are determined by the downstream rails' backpressure behavior.

- Scheduler:

- concatMap does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

-

concatMap

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> concatMap(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper, int prefetch)

Generates and concatenates Publishers on each 'rail', signalling errors immediately and using the given prefetch amount for generating Publishers upfront.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests the prefetch amount from each rail upfront and keeps requesting 75% of this amount after 75% received and the inner sources completed. Requests for the inner sources are determined by the downstream rails' backpressure behavior.

- Scheduler:

- concatMap does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- prefetch - the number of items to prefetch from each inner Publisher

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- IllegalArgumentException - if prefetch is non-positive

-

concatMapDelayError

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> concatMapDelayError(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper, boolean tillTheEnd)

Generates and concatenates Publishers on each 'rail', optionally delaying errors and generating 2 publishers upfront.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests 2 from each rail upfront and keeps requesting 1 when the inner source completes. Requests for the inner sources are determined by the downstream rails' backpressure behavior.

- Scheduler:

- concatMapDelayError does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- tillTheEnd - if true, all errors from the upstream and inner Publishers are delayed till all of them terminate; if false, the error is emitted when an inner Publisher terminates

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

-

concatMapDelayError

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <R> @NonNull ParallelFlowable<R> concatMapDelayError(@NonNull @NonNull Function<? super T,? extends Publisher<? extends R>> mapper, int prefetch, boolean tillTheEnd)

Generates and concatenates Publishers on each 'rail', optionally delaying errors and using the given prefetch amount for generating Publishers upfront.

- Backpressure:

- The operator honors backpressure from the downstream rails and requests the prefetch amount from each rail upfront and keeps requesting 75% of this amount after 75% received and the inner sources completed. Requests for the inner sources are determined by the downstream rails' backpressure behavior.

- Scheduler:

- concatMapDelayError does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the result type

- Parameters:

- mapper - the function to map each rail's value into a Publisher

- prefetch - the number of items to prefetch from each inner Publisher

- tillTheEnd - if true, all errors from the upstream and inner Publishers are delayed till all of them terminate; if false, the error is emitted when an inner Publisher terminates

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- IllegalArgumentException - if prefetch is non-positive

-

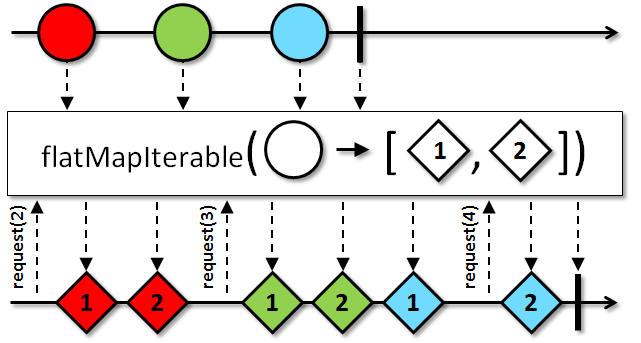

flatMapIterable

@CheckReturnValue @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") @NonNull public final <U> @NonNull ParallelFlowable<U> flatMapIterable(@NonNull @NonNull Function<? super T,? extends Iterable<? extends U>> mapper)

Returns a ParallelFlowable that merges each item emitted by the source on each rail with the values in an Iterable corresponding to that item that is generated by a selector.

- Backpressure:

- The operator honors backpressure from each downstream rail. The source ParallelFlowable is expected to honor backpressure as well. If the source ParallelFlowable violates the rule, the operator will signal a MissingBackpressureException.

- Scheduler:

- flatMapIterable does not operate by default on a particular Scheduler.

- Type Parameters:

- U - the type of item emitted by the resulting Iterable

- Parameters:

- mapper - a function that returns an Iterable sequence of values for a given item emitted by the source ParallelFlowable

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- Since:

- 3.0.0

- See Also:

- ReactiveX operators documentation: FlatMap, flatMapStream(Function)

-

flatMapIterable

@CheckReturnValue @NonNull @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") public final <U> @NonNull ParallelFlowable<U> flatMapIterable(@NonNull @NonNull Function<? super T,? extends Iterable<? extends U>> mapper, int bufferSize)

Returns a ParallelFlowable that merges each item emitted by the source ParallelFlowable with the values in an Iterable corresponding to that item that is generated by a selector.

- Backpressure:

- The operator honors backpressure from each downstream rail. The source ParallelFlowable is expected to honor backpressure as well. If the source ParallelFlowable violates the rule, the operator will signal a MissingBackpressureException.

- Scheduler:

- flatMapIterable does not operate by default on a particular Scheduler.

- Type Parameters:

- U - the type of item emitted by the resulting Iterable

- Parameters:

- mapper - a function that returns an Iterable sequence of values for a given item emitted by the source ParallelFlowable

- bufferSize - the number of elements to prefetch from each upstream rail

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- IllegalArgumentException - if bufferSize is non-positive

- Since:

- 3.0.0

- See Also:

- ReactiveX operators documentation: FlatMap, flatMapStream(Function, int)

-

mapOptional

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final <R> @NonNull ParallelFlowable<R> mapOptional(@NonNull @NonNull Function<? super T,Optional<? extends R>> mapper)

Maps the source values on each 'rail' to an optional and emits its value if any.

Note that the same mapper function may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- mapOptional does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the output value type

- Parameters:

- mapper - the mapper function turning Ts into optional of Rs

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- Since:

- 3.0.0

-

mapOptional

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final <R> @NonNull ParallelFlowable<R> mapOptional(@NonNull @NonNull Function<? super T,Optional<? extends R>> mapper, @NonNull @NonNull ParallelFailureHandling errorHandler)

Maps the source values on each 'rail' to an optional and emits its value if any and handles errors based on the given ParallelFailureHandling enumeration value.

Note that the same mapper function may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- mapOptional does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Type Parameters:

- R - the output value type

- Parameters:

- mapper - the mapper function turning Ts into optional of Rs

- errorHandler - the enumeration that defines how to handle errors thrown from the mapper function

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper or errorHandler is null

- Since:

- 3.0.0

-

mapOptional

@CheckReturnValue @NonNull @SchedulerSupport(value="none") @BackpressureSupport(value=PASS_THROUGH) public final <R> @NonNull ParallelFlowable<R> mapOptional(@NonNull @NonNull Function<? super T,Optional<? extends R>> mapper, @NonNull @NonNull BiFunction<? super Long,? super Throwable,ParallelFailureHandling> errorHandler)

Maps the source values on each 'rail' to an optional and emits its value if any and handles errors based on the value returned by the handler function.

Note that the same mapper function may be called from multiple threads concurrently.

- Backpressure:

- The operator is a pass-through for backpressure and the behavior is determined by the upstream and downstream rail behaviors.

- Scheduler:

- mapOptional does not operate by default on a particular Scheduler.

History: 2.0.8 - experimental

- Type Parameters:

- R - the output value type

- Parameters:

- mapper - the mapper function turning Ts into optional of Rs

- errorHandler - the function called with the current repeat count and failure Throwable; it should return one of the ParallelFailureHandling enumeration values to indicate how to proceed

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper or errorHandler is null

- Since:

- 3.0.0

-

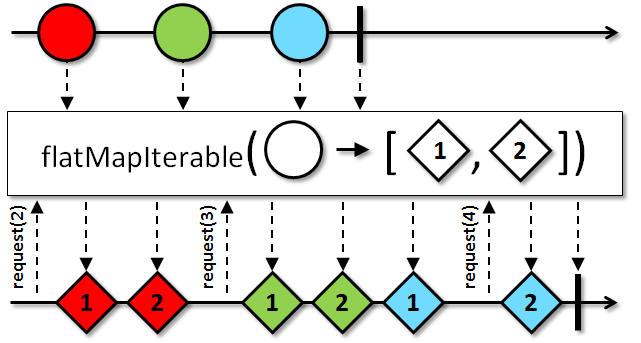

flatMapStream

@CheckReturnValue @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") @NonNull public final <R> @NonNull ParallelFlowable<R> flatMapStream(@NonNull @NonNull Function<? super T,? extends Stream<? extends R>> mapper)

Maps each upstream item on each rail into a Stream and emits the Stream's items to the downstream in a sequential fashion.

Due to the blocking and sequential nature of Java Streams, the streams are mapped and consumed in a sequential fashion without interleaving (unlike a more general flatMap(Function)). Therefore, flatMapStream and concatMapStream are identical operators and are provided as aliases.

The operator closes the Stream upon cancellation and when it terminates. The exceptions raised when closing a Stream are routed to the global error handler (RxJavaPlugins.onError(Throwable)). If a Stream should not be closed, turn it into an Iterable and use flatMapIterable(Function):

    source.flatMapIterable(v -> createStream(v)::iterator);

Note that Streams can be consumed only once; any subsequent attempt to consume a Stream will result in an IllegalStateException.

Primitive streams are not supported and items have to be boxed manually (e.g., via IntStream.boxed()):

    source.flatMapStream(v -> IntStream.rangeClosed(v + 1, v + 10).boxed());

Stream does not support concurrent usage so creating and/or consuming the same instance multiple times from multiple threads can lead to undefined behavior.

- Backpressure:

- The operator honors the downstream backpressure and consumes the inner stream only on demand. The operator prefetches Flowable.bufferSize() items of the upstream (then 75% of it after the 75% received) and caches them until they are ready to be mapped into Streams after the current Stream has been consumed.

- Scheduler:

- flatMapStream does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the element type of the Streams and the result

- Parameters:

- mapper - the function that receives an upstream item and should return a Stream whose elements will be emitted to the downstream

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- Since:

- 3.0.0

- See Also:

- flatMap(Function), flatMapIterable(Function), flatMapStream(Function, int)
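The Stream-related notes for flatMapStream (adapting a Stream to an Iterable, boxing primitive streams, and single consumption) can be checked with plain Java, independent of RxJava. The sketch below is illustrative only: the class name is hypothetical, and createStream is a stand-in for whatever stream factory the mapper would call.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.IntStream;
import java.util.stream.Stream;

class StreamAdapterSketch {

    // Hypothetical stand-in for createStream(v); IntStream is a primitive stream,
    // so boxed() is needed to obtain a Stream<Integer>.
    static Stream<Integer> createStream(int v) {
        return IntStream.rangeClosed(v + 1, v + 3).boxed();
    }

    // Adapts a Stream to an Iterable through a method reference, mirroring
    // v -> createStream(v)::iterator. The resulting Iterable can be iterated only once.
    static List<Integer> drainAsIterable(int v) {
        Iterable<Integer> iterable = createStream(v)::iterator;
        List<Integer> items = new ArrayList<>();
        for (Integer item : iterable) {
            items.add(item);
        }
        return items;
    }

    // Returns true if consuming the same Stream a second time throws IllegalStateException.
    static boolean secondConsumptionFails() {
        Stream<Integer> stream = createStream(0);
        stream.collect(Collectors.toList());
        try {
            stream.collect(Collectors.toList());
            return false;
        } catch (IllegalStateException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(drainAsIterable(10));      // prints [11, 12, 13]
        System.out.println(secondConsumptionFails()); // prints true
    }
}
```

Because the adapter wraps one Stream instance, the mapper should create a fresh Stream for every upstream item rather than reuse a shared one.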

-

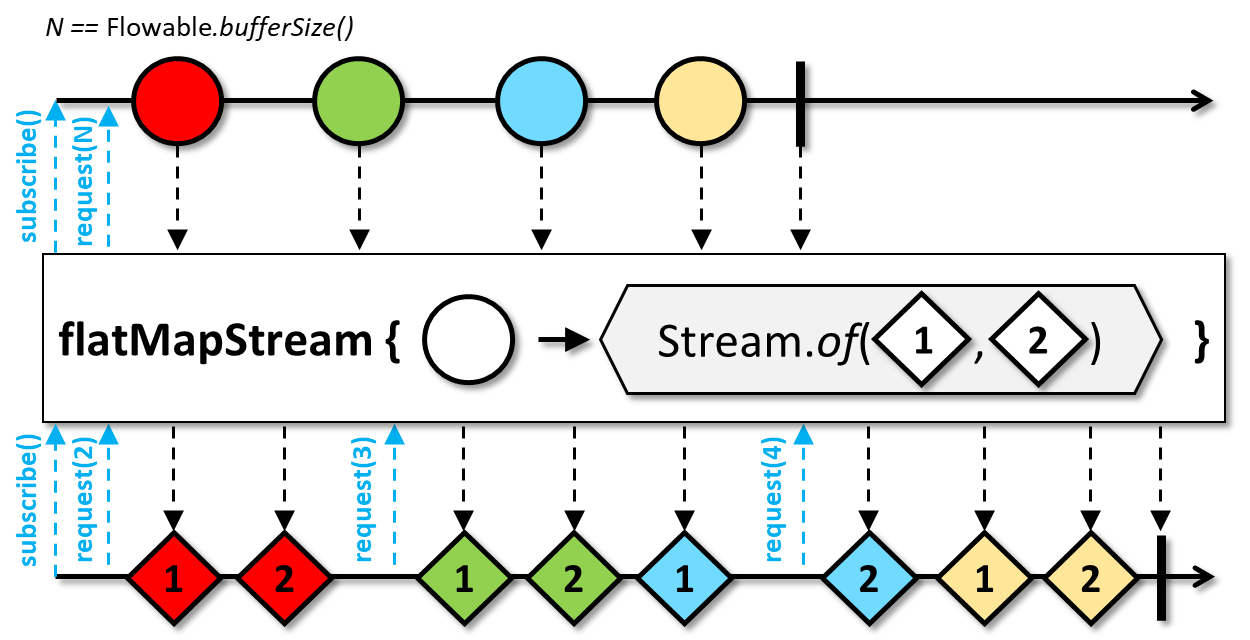

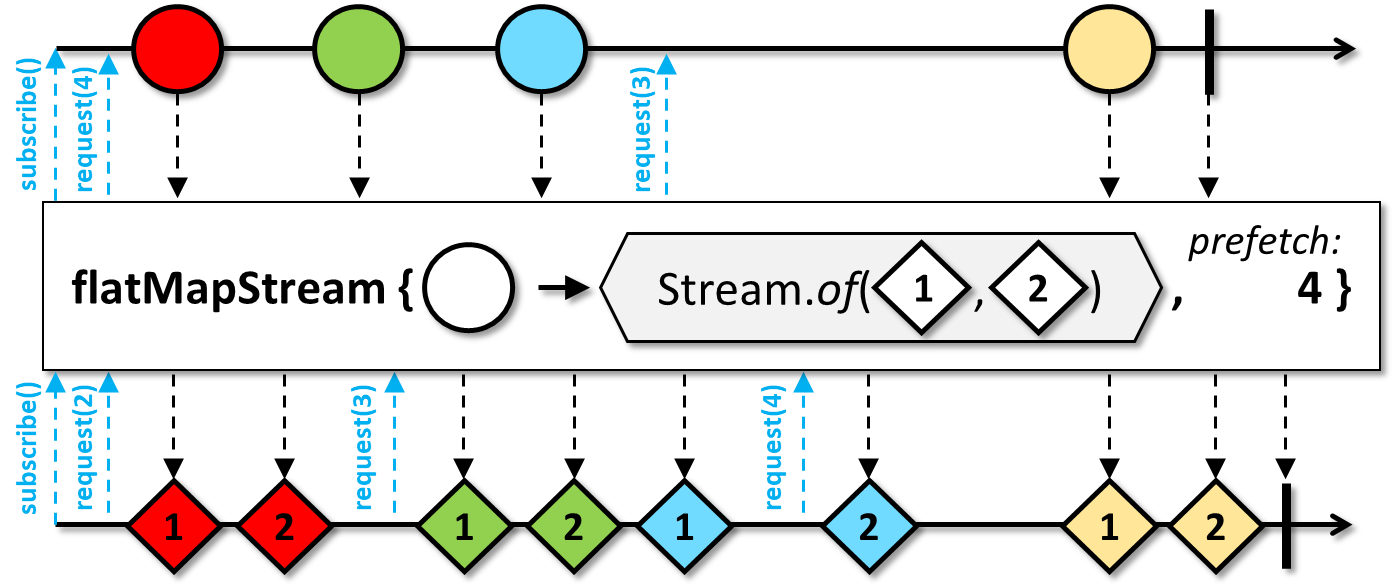

flatMapStream

@CheckReturnValue @BackpressureSupport(value=FULL) @SchedulerSupport(value="none") @NonNull public final <R> @NonNull ParallelFlowable<R> flatMapStream(@NonNull @NonNull Function<? super T,? extends Stream<? extends R>> mapper, int prefetch)

Maps each upstream item of each rail into a Stream and emits the Stream's items to the downstream in a sequential fashion.

Due to the blocking and sequential nature of Java Streams, the streams are mapped and consumed in a sequential fashion without interleaving (unlike a more general flatMap(Function)). Therefore, flatMapStream and concatMapStream are identical operators and are provided as aliases.

The operator closes the Stream upon cancellation and when it terminates. The exceptions raised when closing a Stream are routed to the global error handler (RxJavaPlugins.onError(Throwable)). If a Stream should not be closed, turn it into an Iterable and use flatMapIterable(Function, int):

    source.flatMapIterable(v -> createStream(v)::iterator, 32);

Note that Streams can be consumed only once; any subsequent attempt to consume a Stream will result in an IllegalStateException.

Primitive streams are not supported and items have to be boxed manually (e.g., via IntStream.boxed()):

    source.flatMapStream(v -> IntStream.rangeClosed(v + 1, v + 10).boxed(), 32);

Stream does not support concurrent usage so creating and/or consuming the same instance multiple times from multiple threads can lead to undefined behavior.

- Backpressure:

- The operator honors the downstream backpressure and consumes the inner stream only on demand. The operator prefetches the given amount of upstream items and caches them until they are ready to be mapped into Streams after the current Stream has been consumed.

- Scheduler:

- flatMapStream does not operate by default on a particular Scheduler.

- Type Parameters:

- R - the element type of the Streams and the result

- Parameters:

- mapper - the function that receives an upstream item and should return a Stream whose elements will be emitted to the downstream

- prefetch - the number of upstream items to request upfront, then 75% of this amount after each 75% upstream items received

- Returns:

- the new ParallelFlowable instance

- Throws:

- NullPointerException - if mapper is null

- IllegalArgumentException - if prefetch is non-positive

- Since:

- 3.0.0

- See Also:

- flatMap(Function, boolean, int), flatMapIterable(Function, int)

-

collect

@CheckReturnValue @NonNull @BackpressureSupport(value=UNBOUNDED_IN) @SchedulerSupport(value="none") public final <A,R> @NonNull Flowable<R> collect(@NonNull @NonNull Collector<T,A,R> collector)

Reduces all values within a 'rail' and across 'rails' with the callbacks of the given Collector into one Flowable containing a single value.

Each parallel rail receives its own Collector.accumulator() and Collector.combiner().

- Backpressure:

- The operator honors backpressure from the downstream and consumes the upstream rails in an unbounded manner (requesting Long.MAX_VALUE).

- Scheduler:

- collect does not operate by default on a particular Scheduler.

- Type Parameters:

- A - the accumulator type

- R - the output value type

- Parameters:

- collector - the Collector instance

- Returns:

- the new Flowable instance emitting the collected value

- Throws:

- NullPointerException - if collector is null

- Since:

- 3.0.0
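How a Collector's callbacks can reduce values within each rail and then across rails is sketched below in plain Java. This is a conceptual illustration rather than RxJava's implementation: rails are modeled as in-memory lists processed one after another, and the names CollectorRailsSketch, collectRails and sumAcrossRails are hypothetical.

```java
import java.util.Arrays;
import java.util.List;
import java.util.function.BiConsumer;
import java.util.stream.Collector;
import java.util.stream.Collectors;

class CollectorRailsSketch {

    // Accumulates every rail into its own container, merges the containers with
    // the combiner, and applies the finisher once to produce the single result.
    static <T, A, R> R collectRails(List<List<T>> rails, Collector<T, A, R> collector) {
        A combined = collector.supplier().get();
        for (List<T> rail : rails) {
            A container = collector.supplier().get();
            BiConsumer<A, T> accumulator = collector.accumulator();
            for (T item : rail) {
                accumulator.accept(container, item);
            }
            combined = collector.combiner().apply(combined, container);
        }
        return collector.finisher().apply(combined);
    }

    // Example use with a standard JDK collector that sums integers.
    static int sumAcrossRails(List<List<Integer>> rails) {
        Collector<Integer, ?, Integer> summing = Collectors.summingInt(Integer::intValue);
        return collectRails(rails, summing);
    }

    public static void main(String[] args) {
        List<List<Integer>> rails = Arrays.asList(
            Arrays.asList(1, 2), Arrays.asList(3), Arrays.asList(4, 5));
        System.out.println(sumAcrossRails(rails)); // prints 15
    }
}
```

In the sketch each rail has a private container, so only the combiner step touches state from more than one rail.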

-

-